Frequently Asked Questions¶

Note

Some FAQ answers may use C++ code snippets; however, the answers apply to the python bindings SDK too.

For a list of community driven FAQs and answers, please visit our community forums.

How many threads does the SDK use for inference?¶

The SDK inference engines can use up to 8 threads each, but this is dependent on the number of threads your system has.

The Trueface::SDK::batchIdentifyTopCandidate() function is capable of using all the threads on your machine, depending on the number of probe Faceprints provided.

How can I reduce the number of threads used by the SDK?¶

If you need to reduce the number of threads utilized by the SDK, this can be achieved using the OpenMP environment variable OMP_NUM_THREADS.
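For the Python bindings, one option is to set the variable from inside the script. The following is a minimal sketch, assuming the variable is set before the SDK library is first loaded, since OpenMP runtimes typically read it once at start-up. The helper name `limit_sdk_threads` and the value 4 are illustrative only. Exporting the variable in the shell before launching the process works equally well.

```python
import os


def limit_sdk_threads(num_threads):
    """Set OMP_NUM_THREADS for this process and any child processes.

    Call this before importing the SDK (or any other OpenMP-based library).
    """
    if num_threads < 1:
        raise ValueError("num_threads must be at least 1")
    os.environ["OMP_NUM_THREADS"] = str(num_threads)
    return os.environ["OMP_NUM_THREADS"]


limit_sdk_threads(4)  # example value, tune for your CPU
# import tfsdk  # import the SDK only after the variable has been set
```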

The SDK has been optimized to reduce latency. If you instead want to increase throughput, then limit the number of threads and run inference using multiple instances of the SDK in parallel.

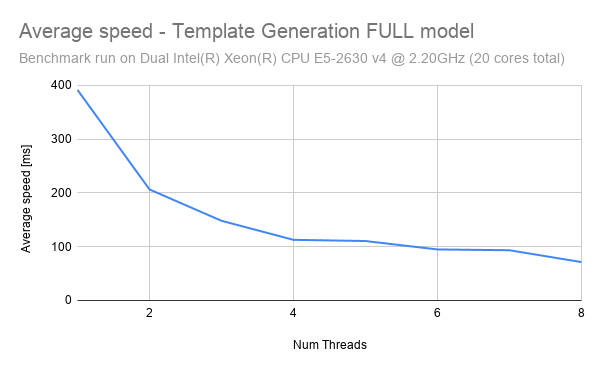

The following graph shows the impact of threads on inference speed.

As can be seen, the graph follows an exponential decay pattern, with the greatest reduction in latency being experienced when moving from 1 thread to 2 threads. The significance of this is that we can actually enforce a reduced thread count in order to increase the CPU throughput. Consider an example where we have a CPU with 8 threads.

Scenario 1: Latency Optimized: In this scenario, we have 1 instance running inference using all 8 threads. Using the chart above, we can approximate the latency to be 75ms - given a single input image, inference can be performed in 75ms. Therefore, this scheme optimizes to reduce latency as much as possible. However, if the instance is provided with 100 input images to process, then it will take a total of 7.5s (100 images * 75ms ) to run inference.

Scenario 2: Throughput Optimized: In this scenario, we have 8 instances running inference using only 1 thread each. Using the chart above, we can approximate the latency to be 400ms - given a single input image, inference will be performed in 400ms. Although this seems like a bad tradeoff compared to scenario 1, scenario 2 shines when we have many input samples. If the instances are provided with 100 input images to process, then it will take a total of 5s (100 images * 400ms / 8 instances) to run inference.

Hence, by running more instances in parallel and reducing the number of threads per instance, we have increased the latency but also increased the overall throughput.
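The arithmetic behind the two scenarios can be checked with a few lines of Python. This is only a sketch of the calculation: the latencies are the approximate values read from the chart, and the function assumes that the images divide evenly across instances and that instances do not slow each other down.

```python
def total_time_s(num_images, latency_ms, num_instances):
    """Wall-clock seconds to process num_images when num_instances run in
    parallel and each image takes latency_ms on a single instance."""
    return num_images * latency_ms / num_instances / 1000.0


scenario_1 = total_time_s(100, 75, 1)   # 1 instance using 8 threads
scenario_2 = total_time_s(100, 400, 8)  # 8 instances using 1 thread each
print(scenario_1, scenario_2)  # 7.5 5.0
```

In throughput terms, that is roughly 13 images per second for scenario 1 versus 20 images per second for scenario 2.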

How can I run inference with multiple instances of the SDK on a single CPU?¶

Since a single instance of the SDK can use up to 8 threads for inference, most 8 thread CPUs will work optimally with only a single instance of the SDK. Running multiple instances of the SDK will utilize more than 8 threads total and will result in time slicing which will slow things down. If you want to use multiple instances, then you must reduce the number of threads used for inference (refer to “How can I reduce the number of threads used by the SDK?” question above). So for example, if you limit the number of threads used for inference to just 4, then you can optimally run 2 instances of the SDK on your 8 thread CPU.
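As a rough sketch of this sizing rule, the helpers below compute how many instances fit on a CPU for a given per-instance thread count, and build an environment for each worker process. Both function names are hypothetical and are not part of the SDK.

```python
import os


def max_instances(cpu_threads, threads_per_instance):
    """Number of SDK instances that fit without oversubscribing the CPU."""
    if cpu_threads < 1 or threads_per_instance < 1:
        raise ValueError("thread counts must be at least 1")
    return max(1, cpu_threads // threads_per_instance)


def worker_environment(threads_per_instance, base_env=None):
    """Copy of the environment with OMP_NUM_THREADS set for one worker."""
    env = dict(os.environ if base_env is None else base_env)
    env["OMP_NUM_THREADS"] = str(threads_per_instance)
    return env


print(max_instances(8, 4))  # 2
```

The returned dictionary can be passed as the `env` argument of `subprocess.Popen` when launching each worker process, so that every instance starts with its own thread limit.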

How can I increase throughput?¶

CPU: Throughput can be increased on CPU by running multiple instances of the SDK in parallel and allocating fewer threads to each instance. See the question titled “How can I reduce the number of threads used by the SDK?” for more information.

GPU: Throughput can be increased on GPU by using batching. Currently our face recognition and mask detection modules support batch inference. You can also increase throughput (and decrease latency) by using images pre-loaded in GPU memory (e.g. by decoding the video stream directly into GPU RAM).

Is the SDK threadsafe?¶

In CPU only mode, the SDK is completely threadsafe. In GPU mode, the SDK is not threadsafe.

What architecture should I use when I have multiple camera streams producing lots of data?¶

For simple use cases, you can connect to a camera stream and do all of the processing directly onboard a single device.

The following approaches are ideal for situations where you have tons of data to process.

The first approach to consider is a publisher/subscriber architecture.

Each camera producing data should push the image into a shared queue, then a worker or pool of workers can consume the data in the queue and perform the necessary operations on the data using the SDK. For a highly scalable design, split up all the components into microservices which can be distributed across many machines (with many GPUs) and use a Message Queue system such as RabbitMQ to facilitate communication and schedule tasks for each of the microservices. For maximum performance, be sure to use the GPU batch inference functions (see batch_fr_cuda.cpp sample app) and use images in GPU RAM (see face_detect_image_in_vram.cpp sample app).
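The shared-queue pattern described above can be sketched in a single process with the Python standard library. This is an illustration of the pattern only: `process_frame` is a hypothetical stand-in for the SDK calls each worker would make with its own SDK instance, the frames are plain strings, and a distributed deployment would swap the in-process queue for a message queue system.

```python
import queue
import threading


def process_frame(frame):
    """Hypothetical stand-in for the SDK calls made on each frame."""
    return f"processed {frame}"


def camera(camera_id, num_frames, frames):
    """Publisher: push (camera_id, frame) items into the shared queue."""
    for i in range(num_frames):
        frames.put((camera_id, f"cam{camera_id}-frame{i}"))


def worker(frames, results):
    """Subscriber: consume frames until a None sentinel arrives."""
    while True:
        item = frames.get()
        if item is None:
            break
        camera_id, frame = item
        results.put((camera_id, process_frame(frame)))


def run_pipeline(num_cameras=3, frames_per_camera=4, num_workers=2):
    frames = queue.Queue(maxsize=16)  # bounded, so slow workers apply back-pressure
    results = queue.Queue()
    workers = [threading.Thread(target=worker, args=(frames, results))
               for _ in range(num_workers)]
    cameras = [threading.Thread(target=camera, args=(c, frames_per_camera, frames))
               for c in range(num_cameras)]
    for t in workers + cameras:
        t.start()
    for t in cameras:
        t.join()
    for _ in workers:
        frames.put(None)  # one sentinel per worker
    for t in workers:
        t.join()
    return [results.get() for _ in range(results.qsize())]
```

Calling `run_pipeline()` returns one result per published frame. The order of the results is not deterministic, because the workers run concurrently.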

Another approach is to process the camera stream data directly at the edge using embedded devices then either run identify at the edge too, or if dealing with massive collections, send the resulting Faceprints to a server / cluster of servers to run the identify calls.

Refer to the “1 to N Identification” tab (on the left) for more information on this approach.

Finally, you can manage a cluster of PTOP instances using kubernetes or even run the instances on auto scaling cloud servers and post images directly to those for processing.

These are just a few of the popular architectures you can follow, but there are many other correct approaches.

Points to note: Each SDK instance can use up to 8 threads for inference on CPU. Being mindful of this, only create as many CPU workers (each with their own SDK instance) as your CPU can support (on most 8 thread CPUs you should only have one instance of the CPU SDK running), otherwise performance will be negatively impacted. Refer to “How can I reduce the number of threads used by the SDK?” question above for more details.

What is the difference between the static library and the dynamic library?¶

In terms of functionality, there is no difference. Each has its own benefits and disadvantages, which you can learn more about here.

What hardware does the GPU library support?¶

The x86-64 GPU enabled SDK supports NVIDIA GPUs with GPU Compute Capability 5.2+, and currently supports CUDA 11.2. The AArch64 GPU enabled SDK supports NVIDIA GPUs with GPU Compute Capability 5.3+, and currently supports CUDA 10.2 (default on NVIDIA Jetson devices).

You can determine your GPU Compute Capability here.

What is the TensorRT engine file and what is it used for?¶

As of V1.0 of the SDK, TensorRT is used for GPU inference for new models such as Trueface::FacialRecognitionModel::TFV6.

The TensorRT engine file is an intermediate format of the model which is optimized for the target hardware based on the selected GPU configuration parameters.

If GPU inference is enabled, the SDK will search for the existence of this engine file in the directory specified by Trueface::ConfigurationOptions.modelPath.

If the engine file is not found, the SDK will generate one and save it to disk (once again, at the path specified by Trueface::ConfigurationOptions.modelPath).

If the engine file does already exist for the existing GPU options, the generation process is bypassed and the engine file is loaded from disk.

Note that the engine generation process can be slow and may take a few minutes.

Note, changing the GPU configuration options such as Trueface::GPUModuleOptions.optBatchSize or Trueface::GPUModuleOptions.precision will cause the engine file to be regenerated.

Additionally, the TensorRT engine file is locked to your GPU type. You can share generated engine files across devices only if they have the same GPU type.
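The load-or-generate behaviour described in this answer can be modelled with a toy cache. This is purely illustrative and says nothing about how the SDK actually names or stores engine files: the key format, the function names, and the dictionary standing in for the model directory are all assumptions made for the example.

```python
import hashlib


def engine_cache_key(gpu_type, opt_batch_size, precision):
    """Hypothetical key: any change to the GPU type or options gives a new key."""
    raw = f"{gpu_type}|{opt_batch_size}|{precision}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:16]


def load_or_generate(cache, gpu_type, opt_batch_size, precision):
    """Return (engine, generated), where generated is True on a cache miss."""
    key = engine_cache_key(gpu_type, opt_batch_size, precision)
    if key in cache:
        return cache[key], False  # fast path: reuse the stored engine
    # Placeholder for the slow generation step.
    engine = f"engine for {gpu_type}, batch {opt_batch_size}, {precision}"
    cache[key] = engine
    return engine, True


cache = {}  # stands in for the directory that holds engine files
_, first = load_or_generate(cache, "gpu-a", 1, "FP16")
_, second = load_or_generate(cache, "gpu-a", 1, "FP16")
_, changed = load_or_generate(cache, "gpu-a", 4, "FP16")
print(first, second, changed)  # True False True
```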

Why is my license key not working with the GPU library?¶

The GPU library requires a different token which is generally tied to the GPU ID. Please speak with a sales representative to receive a GPU token.

Why does the first call to an inference function take much longer than the subsequent calls?¶

Our modules use lazy initialization meaning the machine learning models are only loaded into memory when the function is first called instead of on SDK initialization.

This ensures minimal memory overhead from unused modules. When running speed benchmarks, be sure to discard the first inference time.

Alternatively, if you know you will be using a module, you can choose to initialize it in advance using the Trueface::InitializeModule configuration option.

This option initializes specified modules in the SDK constructor instead of default lazy initialization.
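When benchmarking, the first call can be excluded with a small warm-up loop. The sketch below uses a toy `LazyModule` class as a stand-in for a lazily initialized SDK module; the class, the simulated 50 ms load time, and the `benchmark` helper are assumptions for illustration, not SDK code.

```python
import time


def benchmark(fn, runs=10, warmup=1):
    """Mean seconds per call, ignoring the first `warmup` calls."""
    for _ in range(warmup):
        fn()  # triggers any lazy initialization, result is not timed
    start = time.perf_counter()
    for _ in range(runs):
        fn()
    return (time.perf_counter() - start) / runs


class LazyModule:
    """Toy stand-in for a module that loads its model on first use."""

    def __init__(self):
        self.model = None
        self.loads = 0

    def infer(self):
        if self.model is None:
            time.sleep(0.05)  # simulate loading the model into memory
            self.model = "loaded"
            self.loads += 1
        return self.model


module = LazyModule()
mean_s = benchmark(module.infer, runs=5)
```

Because the warm-up call absorbs the simulated load, `mean_s` reflects only the steady-state cost of `infer`.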

Why was setImage replaced by preprocessImage?¶

The Trueface::SDK::setImage() function introduced state to the SDK which required the user to be mindful of thread safety when operating on a single SDK instance from multiple threads.

By removing state from the SDK, the CPU SDK is now completely threadsafe.

Additionally, the user of the SDK can now employ more versatile design patterns.

For example, the user can consume video from one thread, call the Trueface::SDK::preprocessImage() method and enqueue the Trueface::TFImage and then consume them from the queue for processing from a separate thread.

How do I use the python bindings for the SDK?¶

In order to run the sample apps, you must place the python bindings library in the same directory as the python script.

Alternatively, you can add the directory where the python bindings library resides to your PYTHONPATH environment variable.

You may also need to add the directory where the supporting shared libraries reside to your LD_LIBRARY_PATH environment variable.

All you need to do from there is add import tfsdk in your python script and you are ready to go.
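As an alternative to editing PYTHONPATH, the directory can be added to `sys.path` at the top of the script. This is a minimal sketch: the directory shown is a placeholder, and `add_bindings_dir` is a hypothetical helper. Note that LD_LIBRARY_PATH cannot be handled the same way, since the dynamic loader typically reads it when the process starts, so it should be set before launching Python.

```python
import sys


def add_bindings_dir(path):
    """Make the directory that holds the bindings library importable."""
    if path not in sys.path:
        sys.path.insert(0, path)
    return path in sys.path


add_bindings_dir("/path/to/trueface/bindings")  # placeholder location

try:
    import tfsdk
except ImportError:
    tfsdk = None  # bindings were not found in any sys.path entry
```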

How do I choose a similarity threshold for face recognition?¶

Navigate here and use the ROC curves to select a threshold based on your use case. Refer to this blog post for advice on reading ROC curves.
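If you also have labelled data of your own, the same idea can be applied directly: pick the threshold at which the false positive rate on impostor (non-matching) pairs stays within your target. The sketch below is a generic illustration of that technique, not the procedure used to produce the published ROC curves; the scores are made-up example values and both function names are hypothetical.

```python
def threshold_for_fpr(impostor_scores, target_fpr):
    """A threshold whose false positive rate on impostor_scores is at most
    target_fpr, where a pair is accepted when score > threshold."""
    if not impostor_scores:
        raise ValueError("impostor_scores must not be empty")
    if not 0.0 <= target_fpr < 1.0:
        raise ValueError("target_fpr must be in [0, 1)")
    ranked = sorted(impostor_scores, reverse=True)
    # Number of false accepts that can be tolerated at the target rate.
    allowed = min(int(target_fpr * len(ranked)), len(ranked) - 1)
    return ranked[allowed]


def false_positive_rate(impostor_scores, threshold):
    """Fraction of impostor pairs that would be wrongly accepted."""
    return sum(s > threshold for s in impostor_scores) / len(impostor_scores)


scores = [0.05, 0.10, 0.15, 0.20, 0.30, 0.35, 0.40, 0.55]  # example data
threshold = threshold_for_fpr(scores, 0.25)
print(threshold, false_positive_rate(scores, threshold))  # 0.35 0.25
```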

What are the differences between the face recognition models?¶

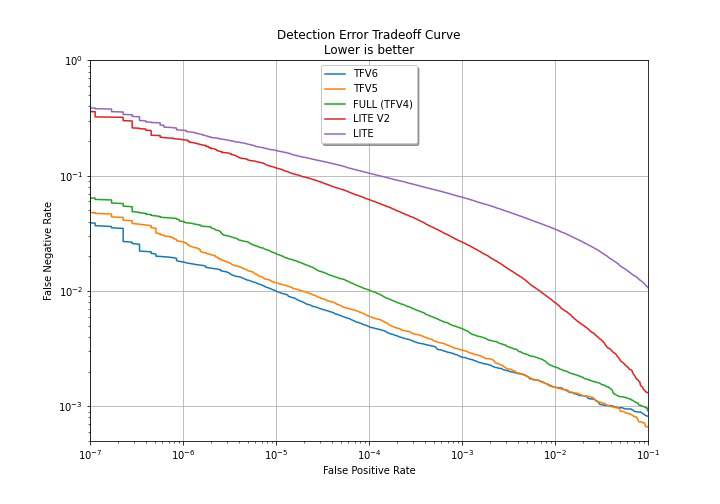

The Trueface::FacialRecognitionModel::LITE model is our most lightweight model which has been optimized to minimize latency and resource usage (RAM). It is also our lowest accuracy model.

It is therefore advised that this model only be used for low accuracy 1 to 1 matching use cases, embedded devices with limited computing ability, or prototyping.

The Trueface::FacialRecognitionModel::LITE_V2 model is another lightweight model which has improved accuracy over the previous LITE model, though it does have increased inference time (see our benchmarks page for more details).

It has a 39% reduction in False Negative Rate (FNR) at a False Positive Rate (FPR) of 10^-4 and a 61% reduction in FNR at a FPR of 10^-3 compared to the previous LITE model.

This model is ideal for embedded systems or lightweight CPU only deployments, prototyping, and some 1 to 1 matching use cases.

The Trueface::FacialRecognitionModel::FULL model (TFV4) has better accuracy than the Trueface::FacialRecognitionModel::LITE model, but also has greater inference time and RAM usage.

It is advised for 1 to N use cases, and we suggest that you run this model using a GPU.

Note, TFV4 has now been deprecated and replaced by TFV5 which has better performance.

Despite this, we will continue providing support for TFV4 for clients with existing collections.

The Trueface::FacialRecognitionModel::TFV5 model is the replacement for the Trueface::FacialRecognitionModel::FULL (TFV4) model, and offers improved accuracy.

It has a 43% reduction in FNR compared to TFV4 (at a FPR of 10^-5).

It is currently our most accurate face recognition model for unmasked face images.

This model has similar inference time and resource usage to the Trueface::FacialRecognitionModel::FULL model, so it is also advised you use this model with a GPU.

This model is ideal for 1 to N use cases, or use cases that require the highest accuracy.

The Trueface::FacialRecognitionModel::TFV6 model is currently our most accurate face recognition model for masked face images.

Use Trueface::FacialRecognitionModel::TFV6 in situations where it is anticipated that the probe image contains a masked face (for 1 to N search), or where one or both face images are masked (for 1 to 1 comparisons).

This model has comparable inference time to Trueface::FacialRecognitionModel::TFV5.

Below is a Detection Error Tradeoff graph which shows the difference in performance between the three models. The DET graph plots the False Negative Rate against the False Positive Rate. A flatter and lower curve indicates better performance.

Are Faceprints compatible between models?¶

Faceprints are not compatible between models.

That means that if you have a collection filled with Trueface::FacialRecognitionModel::FULL model Faceprints, you will not be able to run a query against that collection using a Trueface::FacialRecognitionModel::TFV5 Faceprint.

The SDK has internal checks and will throw an error if you accidentally try to do this.

How can I upgrade my collection if it is filled with Faceprints from a deprecated model?¶

As of right now, there is no way to upgrade your existing Faceprints to a new model (such as from Trueface::FacialRecognitionModel::FULL to Trueface::FacialRecognitionModel::TFV5).

For this reason, we will continue providing support for deprecated models so that if you have an existing collection containing Faceprints from a deprecated model, you do not need to worry about that model being removed in future releases.

With this in mind, we advise you to save your enrollment images in a database of your own choosing. That way, when we do release a new and improved face recognition model, you can re-generate Faceprints for all your images using the new model and enroll them into an updated collection.

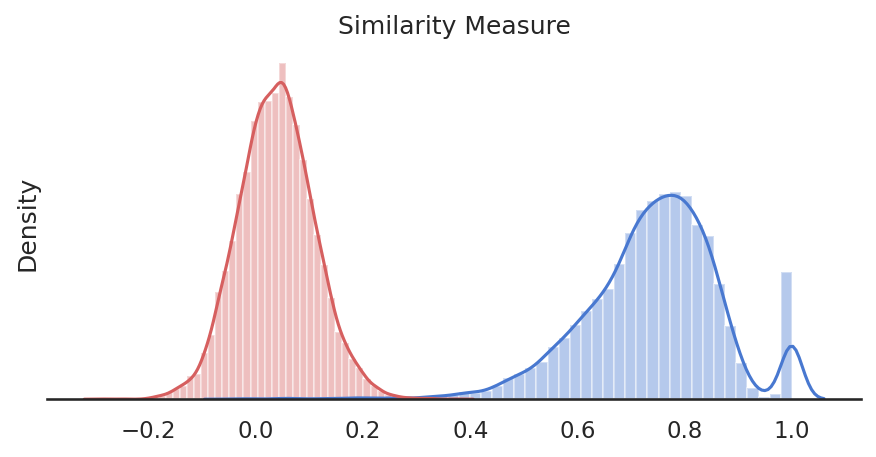

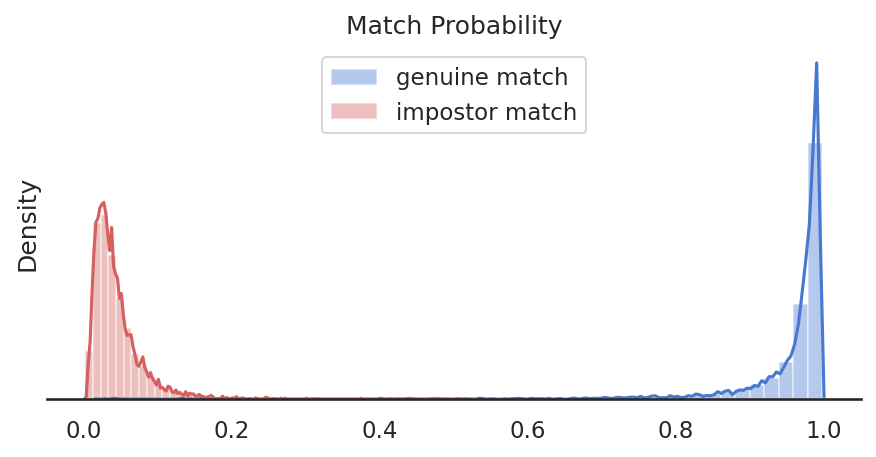

What is the difference between similarity score and match probability?¶

The similarity score refers to the mathematical similarity between two feature vectors in vector space. The similarity score values can range from -1 to 1. A regression model is used to convert this similarity score to match probability, which is between 0 and 1, making it more human readable. Ultimately, the two metrics represent the same thing. You should use the similarity score when thresholding for face recognition.
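To make the similarity score concrete, the sketch below computes cosine similarity between two vectors and thresholds the result. The text above does not name the exact metric; cosine similarity is assumed here only because it has the same -1 to 1 range. The regression that maps the score to a match probability is not reproduced, and the example threshold is arbitrary.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


def is_match(similarity, threshold):
    """Threshold on the similarity score, as the answer above recommends."""
    return similarity >= threshold


same = cosine_similarity([1.0, 0.0], [1.0, 0.0])       # identical direction
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])  # opposite direction
unrelated = cosine_similarity([1.0, 0.0], [0.0, 1.0])  # orthogonal
```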

How do createDatabaseConnection and createLoadCollection work?¶

Trueface::SDK::createDatabaseConnection() is used to establish a database connection.

This must always be called initially before loading Faceprints from a collection or enrolling Faceprints into a new collection, unless the Trueface::DatabaseManagementSystem::NONE option is being used, in which case it doesn’t need to be called and you can go straight to Trueface::SDK::createLoadCollection().

Next, the user must call Trueface::SDK::createLoadCollection() and provide a collection name.

If a collection with the specified name already exists in the database which we have connected to, then all the Faceprints in that collection will be loaded from the database collection into memory (RAM).

If no collection exists with the specified name, then a new collection is created. From here, the user can call Trueface::SDK::enrollFaceprint() to save Faceprints to both the database collection and in-memory (RAM) collection.

If using the Trueface::DatabaseManagementSystem::NONE option, a new collection will always be created on a call to this function, and the previously loaded collection (if this is not the first time calling this function) will be deleted, since it is only stored in RAM.

From here, the 1 to N identification functions such as Trueface::SDK::identifyTopCandidate() can be used to search through the collection for an identity.

Why are no faces being detected in my large images?¶

If the faces in your images are very large, then the face detector may not be able to detect the face using the default Trueface::ConfigurationOptions.smallestFaceHeight parameter.

The face detector has a detection scale range of about 5 octaves. For example, a Trueface::ConfigurationOptions.smallestFaceHeight of 40 pixels yields a detection scale range of ~40 pixels to 1280 (= 40 x 2^5) pixels.

If you are dealing with very large images, or with dynamic input images, it is best to set the Trueface::ConfigurationOptions.smallestFaceHeight parameter to -1.

This will dynamically adjust the face detection scale range from image-height/32 to image-height to ensure that large faces are detected in high resolution images.
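The scale range arithmetic can be written out as a short helper. This is a sketch of the calculation described in this answer (a range of about 5 octaves, or image-height/32 to image-height when the parameter is -1); the function name is hypothetical and the result is an approximation, not an exact detector guarantee.

```python
def detection_range(smallest_face_height, image_height=None, octaves=5):
    """Approximate (min, max) detectable face height in pixels."""
    if smallest_face_height == -1:
        if image_height is None:
            raise ValueError("image_height is required in dynamic mode")
        return image_height / 32, float(image_height)
    if smallest_face_height <= 0:
        raise ValueError("smallest_face_height must be positive or -1")
    return float(smallest_face_height), float(smallest_face_height * 2 ** octaves)


print(detection_range(40))        # (40.0, 1280.0)
print(detection_range(-1, 2160))  # (67.5, 2160.0)
```

For instance, a face 1500 pixels tall in a 2160 pixel high image falls outside the range for a setting of 40, but inside the range for a setting of -1.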

How can I speed up face detection?¶

You can speed up face detection by setting the Trueface::ConfigurationOptions.smallestFaceHeight parameter appropriately.

Increasing the Trueface::ConfigurationOptions.smallestFaceHeight will result in faster inference times, so try to set the Trueface::ConfigurationOptions.smallestFaceHeight as high as possible for your use case if speed is a critical requirement.

Why is GPU face detection slow for images of varying sizes?¶

The GPU face detector works best when all the input images have the same dimensions. This will be the case if consuming images from a video stream. However, in some circumstances, you may need to run face detection over a collection of images of varying sizes. With the GPU face detector, any time the image dimensions are changed, there will be an initial slowdown on inference as the GPU buffers need to be reallocated. In order to avoid these slowdowns and speed things up, consider resizing the images to a fixed size. Apply letterbox padding in order to maintain the image aspect ratio even after resizing.
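The geometry of a letterbox resize can be sketched without any imaging library. The helper below (a hypothetical name, not an SDK function) computes the resized dimensions and the padding needed on each side to fit a source image into a fixed target size while preserving the aspect ratio. The actual resizing and padding would then be done with the image library of your choice.

```python
def letterbox_geometry(src_w, src_h, dst_w, dst_h):
    """Return (new_w, new_h, pad_left, pad_top, pad_right, pad_bottom)."""
    if min(src_w, src_h, dst_w, dst_h) < 1:
        raise ValueError("all dimensions must be at least 1")
    if src_w * dst_h >= src_h * dst_w:
        # Width is the limiting dimension: fill it and scale the height.
        new_w = dst_w
        new_h = max(1, round(src_h * dst_w / src_w))
    else:
        # Height is the limiting dimension: fill it and scale the width.
        new_h = dst_h
        new_w = max(1, round(src_w * dst_h / src_h))
    pad_x = dst_w - new_w
    pad_y = dst_h - new_h
    pad_left = pad_x // 2
    pad_top = pad_y // 2
    return new_w, new_h, pad_left, pad_top, pad_x - pad_left, pad_y - pad_top


print(letterbox_geometry(1920, 1080, 640, 640))  # (640, 360, 0, 140, 0, 140)
```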

What does the frVectorCompression flag do? When should I use it?¶

The Trueface::ConfigurationOptions.frVectorCompression flag is used to enable optimizations in the SDK which compress the feature vector and improve the match speed for both 1 to 1 comparisons and 1 to N identification.

The flag should be enabled when matching is very time critical, or in environments with limited memory or disk space as it will reduce the feature vector memory footprint.

You can find a list of up to date benchmarks here.

The trade-off of using this flag is that it will cause a very slight loss of accuracy. However, the method has been optimized to ensure this loss is extremely minimal.

What does a typical 1 to N face recognition pipeline involve?¶

Although there are various approaches you can take depending on your requirements and architecture (ex. largest face only vs all detected faces), the following pipelines should provide you with some guidance.

Offline steps - Enrollment:

The offline steps involve enrolling the Trueface::Faceprint into a database of our choosing.

We must therefore perform the following steps:

1. Trueface::SDK::preprocessImage()
2. Trueface::SDK::detectLargestFace()
3. Trueface::SDK::extractAlignedFace()
4. Perform the various checks described here
5. Trueface::SDK::getFaceFeatureVector()
6. Trueface::SDK::enrollFaceprint()

Online steps - Identification:

The online steps involve querying our database using a probe Trueface::Faceprint to determine if there is a potential match candidate.

We must therefore perform the following steps:

1. Trueface::SDK::preprocessImage()
2. Trueface::SDK::detectFaces()
3. For either the largest face only or every detected face:
   1. Trueface::SDK::getFaceFeatureVector()
   2. Trueface::SDK::identifyTopCandidate()