Face Detection and Recognition

-

SDK.detect_faces(self: tfsdk.SDK) → List[Trueface::FaceBoxAndLandmarks]

Detect all the faces in the image and return their bounding boxes and facial landmarks. This method has a small false positive rate; to reduce it to near zero, discard faces with a score lower than 0.90.
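The score filter described above can be sketched as follows. This is a minimal illustration that relies only on the documented `score` property of `FaceBoxAndLandmarks`; `FakeFace` and `filter_confident_faces` are hypothetical names introduced so the logic runs without the SDK installed.

```python
from dataclasses import dataclass
from typing import Iterable, List


# Hypothetical stand-in for tfsdk.FaceBoxAndLandmarks; only `score` is used.
@dataclass
class FakeFace:
    score: float


def filter_confident_faces(faces: Iterable, min_score: float = 0.90) -> List:
    """Keep only detections whose score is at least `min_score`."""
    return [face for face in faces if face.score >= min_score]


# With the real SDK (not run here):
#   faces = filter_confident_faces(sdk.detect_faces())
detections = [FakeFace(0.99), FakeFace(0.42), FakeFace(0.91)]
confident = filter_confident_faces(detections)
```

Keeping the threshold as a parameter makes it easy to trade recall for precision without touching the calling code.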

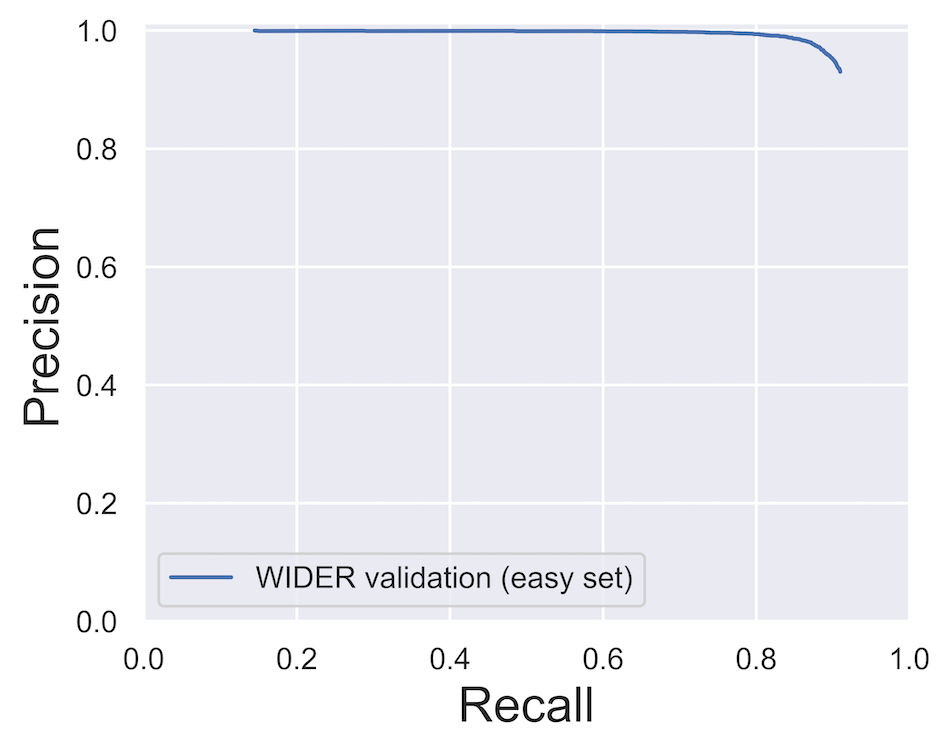

The recall and precision of the face detection algorithm on the WIDER FACE dataset:

The effect of face height on similarity score:

-

SDK.detect_largest_face(self: tfsdk.SDK) → Tuple[bool, Trueface::FaceBoxAndLandmarks]

Detect the largest face in the image. The returned boolean is false if no face is detected.
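Because the face box is returned alongside a success flag, callers need to check the flag before using the box. A small sketch that folds the documented `(found, face)` tuple into an optional value; `largest_face_or_none` and `FakeSDK` are hypothetical helpers, and `FakeSDK` only imitates the documented return shape.

```python
from typing import Optional


def largest_face_or_none(sdk) -> Optional[object]:
    """Return the largest detected face, or None if no face was found.

    `sdk` is any object whose detect_largest_face() returns a (found, face)
    tuple, as documented for tfsdk.SDK.
    """
    found, face = sdk.detect_largest_face()
    return face if found else None


# Hypothetical stand-in for tfsdk.SDK, used only to exercise the helper.
class FakeSDK:
    def __init__(self, result):
        self._result = result

    def detect_largest_face(self):
        return self._result


print(largest_face_or_none(FakeSDK((True, "face-box"))))  # face-box
print(largest_face_or_none(FakeSDK((False, None))))       # None
```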

-

SDK.get_face_landmarks(self: tfsdk.SDK, face_box_and_landmarks: Trueface::FaceBoxAndLandmarks) → Tuple[Trueface::ErrorCode, List[Trueface::Point<int>]]

Obtain the 106 face landmarks.

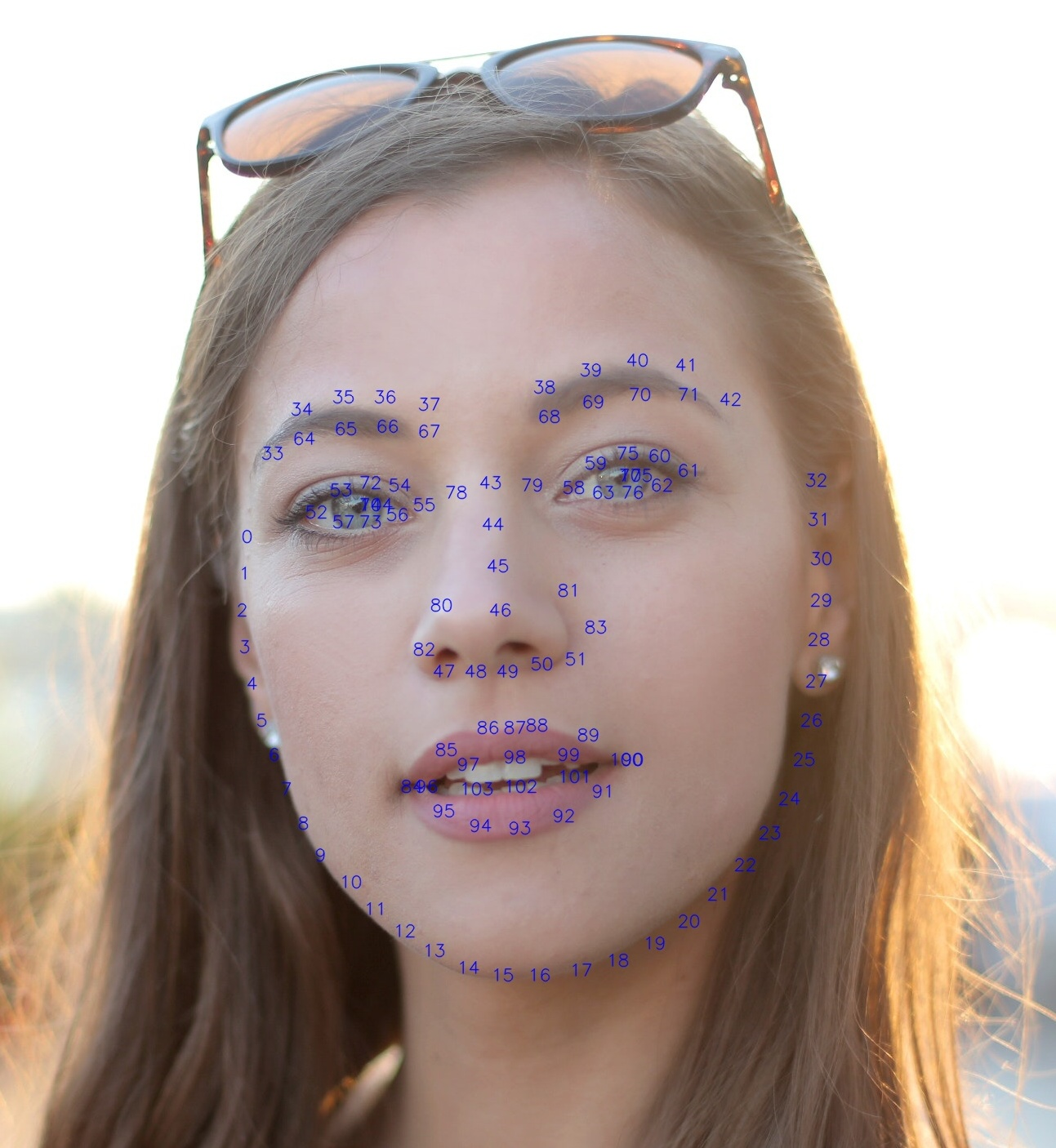

The order of the face landmarks:

-

SDK.extract_aligned_face(*args, **kwargs)

Overloaded function.

extract_aligned_face(self: tfsdk.SDK, face_box_and_landmarks: Trueface::FaceBoxAndLandmarks, margin_left: int = 0, margin_top: int = 0, margin_right: int = 0, margin_bottom: int = 0, scale: float = 1.0) -> numpy.ndarray[uint8]

Extract the aligned face as a NumPy array of 112x112 pixels. Changing the margins or scale changes the face chip size. If the face chip will be used with Trueface algorithms (e.g. face recognition), do NOT change the default margin and scale values.
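Since recognition expects the default 112x112 chip, a cheap guard before calling recognition methods can catch chips produced with non-default margins or scale. A minimal sketch; `is_default_face_chip` is a hypothetical helper, and it checks only the first two dimensions because the channel count is not stated here.

```python
import numpy as np

FACE_CHIP_SIZE = (112, 112)  # default output size of extract_aligned_face


def is_default_face_chip(face_image: np.ndarray) -> bool:
    """True if the array has uint8 pixels and a 112x112 spatial size."""
    return (
        isinstance(face_image, np.ndarray)
        and face_image.dtype == np.uint8
        and face_image.shape[:2] == FACE_CHIP_SIZE
    )


# With the real SDK (not run here):
#   chip = sdk.extract_aligned_face(face)
#   assert is_default_face_chip(chip)
chip = np.zeros((112, 112, 3), dtype=np.uint8)  # stand-in for a real chip
wide = np.zeros((112, 140, 3), dtype=np.uint8)  # a non-default chip size
print(is_default_face_chip(chip), is_default_face_chip(wide))  # True False
```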

extract_aligned_face(self: tfsdk.SDK, buffer_pointer: int, face_box_and_landmarks: Trueface::FaceBoxAndLandmarks, margin_left: int = 0, margin_top: int = 0, margin_right: int = 0, margin_bottom: int = 0, scale: float = 1.0) -> Trueface::ErrorCode

Extract the aligned face into an array of 112x112 pixels referenced by buffer_pointer. Changing the margins or scale changes the face chip size. If the face chip will be used with Trueface algorithms (e.g. face recognition), do NOT change the default margin and scale values.
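The pointer overload takes an integer address instead of returning a new array. One way to obtain such an address from Python is NumPy's `ndarray.ctypes.data`. This sketch assumes a C-contiguous 112x112 buffer with 3 uint8 channels; that layout is an assumption not confirmed by this reference, so check the SDK documentation for the exact size the native code writes.

```python
import numpy as np

# Assumption: 112x112 pixels, 3 uint8 channels, C-contiguous (row-major).
CHIP_SHAPE = (112, 112, 3)


def allocate_chip_buffer() -> np.ndarray:
    """Allocate a zeroed, contiguous uint8 buffer for native code to fill."""
    return np.zeros(CHIP_SHAPE, dtype=np.uint8)


buffer = allocate_chip_buffer()
buffer_pointer = buffer.ctypes.data  # integer address of the first byte

# With the real SDK (not run here); keep `buffer` alive while it is in use:
#   error = sdk.extract_aligned_face(buffer_pointer, face)  # returns an ErrorCode
#   # on success, the aligned chip is now stored in `buffer`
```

The Python variable `buffer` owns the memory, so it must outlive every native call that writes through `buffer_pointer`.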

-

SDK.save_face_image(self: tfsdk.SDK, face_image: numpy.ndarray[uint8], filepath: str) → None

Save the face image to a JPEG file. The face image must have a size of 112x112 pixels (the default extract_aligned_face margin and scale values).

-

SDK.get_face_feature_vector(*args, **kwargs)

Overloaded function.

get_face_feature_vector(self: tfsdk.SDK, aligned_face_image: numpy.ndarray[uint8]) -> Tuple[Trueface::ErrorCode, Trueface::Faceprint]

Return the feature vector for the given aligned face image. The face image must have a size of 112x112 pixels (the default extract_aligned_face margin and scale values).

get_face_feature_vector(self: tfsdk.SDK, face_box_and_landmarks: Trueface::FaceBoxAndLandmarks) -> Tuple[Trueface::ErrorCode, Trueface::Faceprint]

Extract the face feature vector from the face box.

-

SDK.get_face_feature_vectors(*args, **kwargs)

Overloaded function.

get_face_feature_vectors(self: tfsdk.SDK, aligned_face_images: List[numpy.ndarray[uint8]]) -> Tuple[Trueface::ErrorCode, List[Trueface::Faceprint]]

Extract the face feature vectors from the aligned face images. This batch processing method increases throughput on platforms such as CUDA.

get_face_feature_vectors(self: tfsdk.SDK, aligned_face_images: List[int]) -> Tuple[Trueface::ErrorCode, List[Trueface::Faceprint]]

Extract the face feature vectors from the aligned face images. This batch processing method increases throughput on platforms such as CUDA.
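To benefit from batching without sending an unbounded list in one call, the aligned chips can be grouped into fixed-size batches. A minimal sketch; `batches` is a hypothetical helper and the batch size of 16 is an arbitrary choice, not an SDK recommendation.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batches(items: Sequence[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive slices of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


# With the real SDK (not run here):
#   faceprints = []
#   for chunk in batches(aligned_chips, 16):
#       error, prints = sdk.get_face_feature_vectors(chunk)
#       # check `error` before using `prints`
#       faceprints.extend(prints)
print([len(b) for b in batches(list(range(37)), 16)])  # [16, 16, 5]
```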

-

SDK.get_largest_face_feature_vector(self: tfsdk.SDK) → Tuple[Trueface::ErrorCode, Trueface::Faceprint]

Detect the largest face in the image and return its feature vector. If no face is detected in the image, ERRORCODE.NO_FACE_IN_FRAME is returned.

-

static SDK.faceprint_to_json(faceprint: Trueface::Faceprint) → str

Convert a Faceprint into a JSON string.

-

static SDK.json_to_faceprint(json_string: str) → Tuple[Trueface::ErrorCode, Trueface::Faceprint]

Create a Faceprint from a JSON string.
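The two static methods make it possible to persist enrolled faceprints as plain text. A sketch of a simple on-disk gallery that stores each faceprint's JSON string under an identity name; `save_gallery`, `load_gallery`, and `alice_print` are hypothetical names, and the placeholder string stands in for real `faceprint_to_json` output.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict


def save_gallery(path: Path, gallery: Dict[str, str]) -> None:
    """Write a mapping of identity -> faceprint JSON string to disk."""
    path.write_text(json.dumps(gallery), encoding="utf-8")


def load_gallery(path: Path) -> Dict[str, str]:
    """Read back the mapping written by save_gallery."""
    return json.loads(path.read_text(encoding="utf-8"))


# With the real SDK (not run here):
#   gallery = {"alice": tfsdk.SDK.faceprint_to_json(alice_print)}
#   save_gallery(Path("gallery.json"), gallery)
#   error, alice_print = tfsdk.SDK.json_to_faceprint(load_gallery(Path("gallery.json"))["alice"])
with tempfile.TemporaryDirectory() as tmp:
    gallery_path = Path(tmp) / "gallery.json"
    save_gallery(gallery_path, {"alice": '{"placeholder": true}'})
    restored = load_gallery(gallery_path)
```

Each faceprint stays an opaque string, so the gallery file does not depend on the internal layout of the faceprint JSON.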

-

SDK.get_similarity(self: tfsdk.SDK, feature_vector_1: Trueface::Faceprint, feature_vector_2: Trueface::Faceprint) → Tuple[Trueface::ErrorCode, float, float]

Compute the similarity of the given feature vectors. Returns the match probability and the similarity score, in that order.
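For 1:N identification, a probe can be compared against every enrolled faceprint and the highest match probability kept. A sketch that accepts any callable with the documented `(error_code, match_probability, similarity_score)` return order, so `sdk.get_similarity` could be passed directly; `best_match`, `fake_similarity`, and the `min_probability` threshold are hypothetical, and error-code handling is omitted for brevity.

```python
from typing import Callable, Dict, Optional, Tuple, TypeVar

P = TypeVar("P")


def best_match(
    probe: P,
    gallery: Dict[str, P],
    similarity: Callable[[P, P], Tuple[object, float, float]],
    min_probability: float,
) -> Optional[Tuple[str, float]]:
    """Return (identity, match_probability) of the best entry, or None."""
    best: Optional[Tuple[str, float]] = None
    for identity, candidate in gallery.items():
        # Documented order: (error_code, match_probability, similarity_score).
        _error, probability, _score = similarity(probe, candidate)
        if best is None or probability > best[1]:
            best = (identity, probability)
    if best is None or best[1] < min_probability:
        return None
    return best


# Hypothetical stand-in: toy scalar "faceprints" compared by distance.
def fake_similarity(a: float, b: float) -> Tuple[None, float, float]:
    p = max(0.0, 1.0 - abs(a - b))
    return None, p, p


# With the real SDK (not run here):
#   match = best_match(probe_print, enrolled_prints, sdk.get_similarity, 0.5)
result = best_match(0.30, {"alice": 0.32, "bob": 0.90}, fake_similarity, 0.5)
```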

-

SDK.estimate_face_image_quality(self: tfsdk.SDK, aligned_face_image: numpy.ndarray[uint8]) → Tuple[Trueface::ErrorCode, float]

Estimate the image quality for face recognition.

-

SDK.estimate_head_orientation(self: tfsdk.SDK, face_box_and_landmarks: Trueface::FaceBoxAndLandmarks) → Tuple[Trueface::ErrorCode, float, float, float]

Estimate the head orientation using the detected facial landmarks. Returns yaw, pitch, and roll, in that order, in radians.
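Because the angles are returned in radians, converting them with `math.degrees` makes pose limits easier to read. A sketch of a pose gate; `orientation_in_degrees` and `is_roughly_frontal` are hypothetical helpers, and the default limits of 30°, 20°, and 30° are arbitrary illustrations, not SDK recommendations.

```python
import math
from typing import Sequence, Tuple


def orientation_in_degrees(yaw: float, pitch: float, roll: float) -> Tuple[float, float, float]:
    """Convert (yaw, pitch, roll) from radians to degrees."""
    return (math.degrees(yaw), math.degrees(pitch), math.degrees(roll))


def is_roughly_frontal(
    yaw: float,
    pitch: float,
    roll: float,
    max_abs_deg: Sequence[float] = (30.0, 20.0, 30.0),  # hypothetical limits
) -> bool:
    """True if each angle's magnitude is within its limit (yaw, pitch, roll order)."""
    angles = orientation_in_degrees(yaw, pitch, roll)
    return all(abs(a) <= limit for a, limit in zip(angles, max_abs_deg))


# With the real SDK (not run here):
#   error, yaw, pitch, roll = sdk.estimate_head_orientation(face)
#   keep = is_roughly_frontal(yaw, pitch, roll)
looking_sideways = is_roughly_frontal(math.radians(45.0), 0.0, 0.0)
```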

The accuracy of this method is estimated using 1920x1080 pixel test images. A test image:

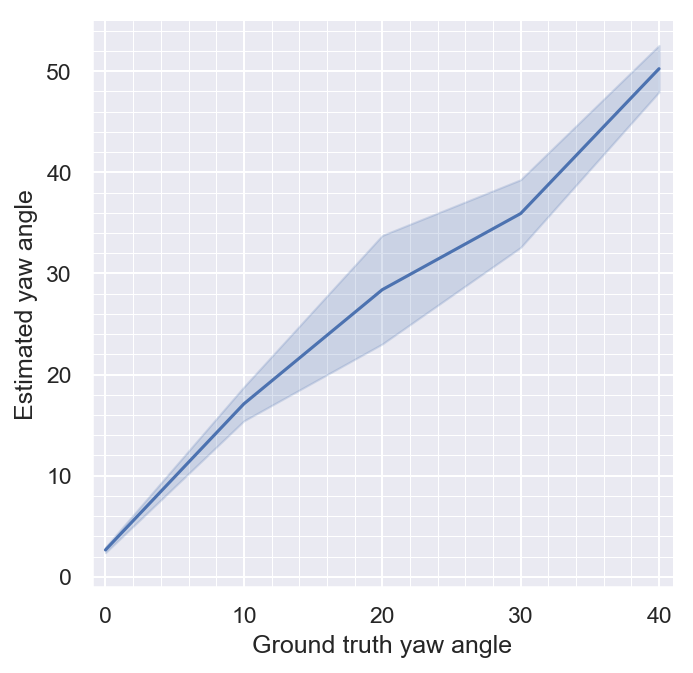

The accuracy of the head orientation estimation:

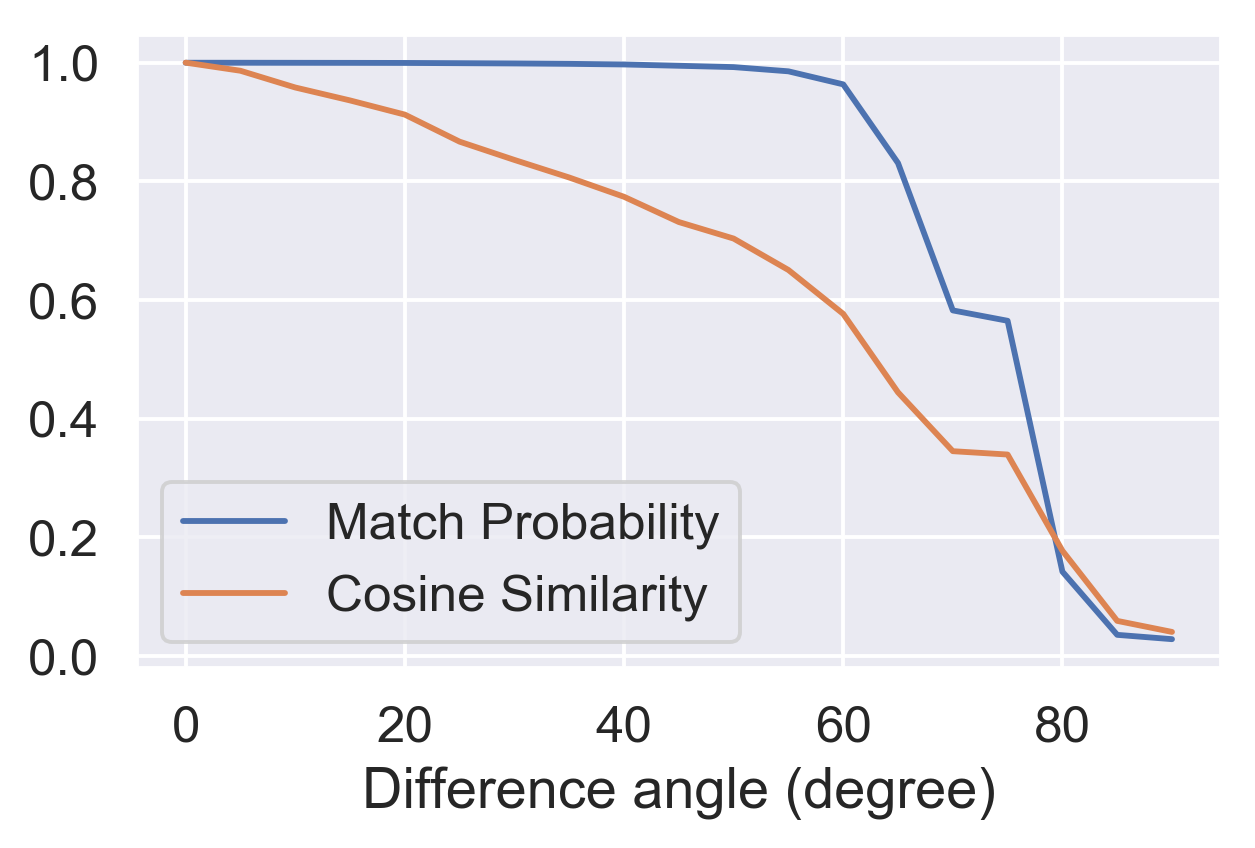

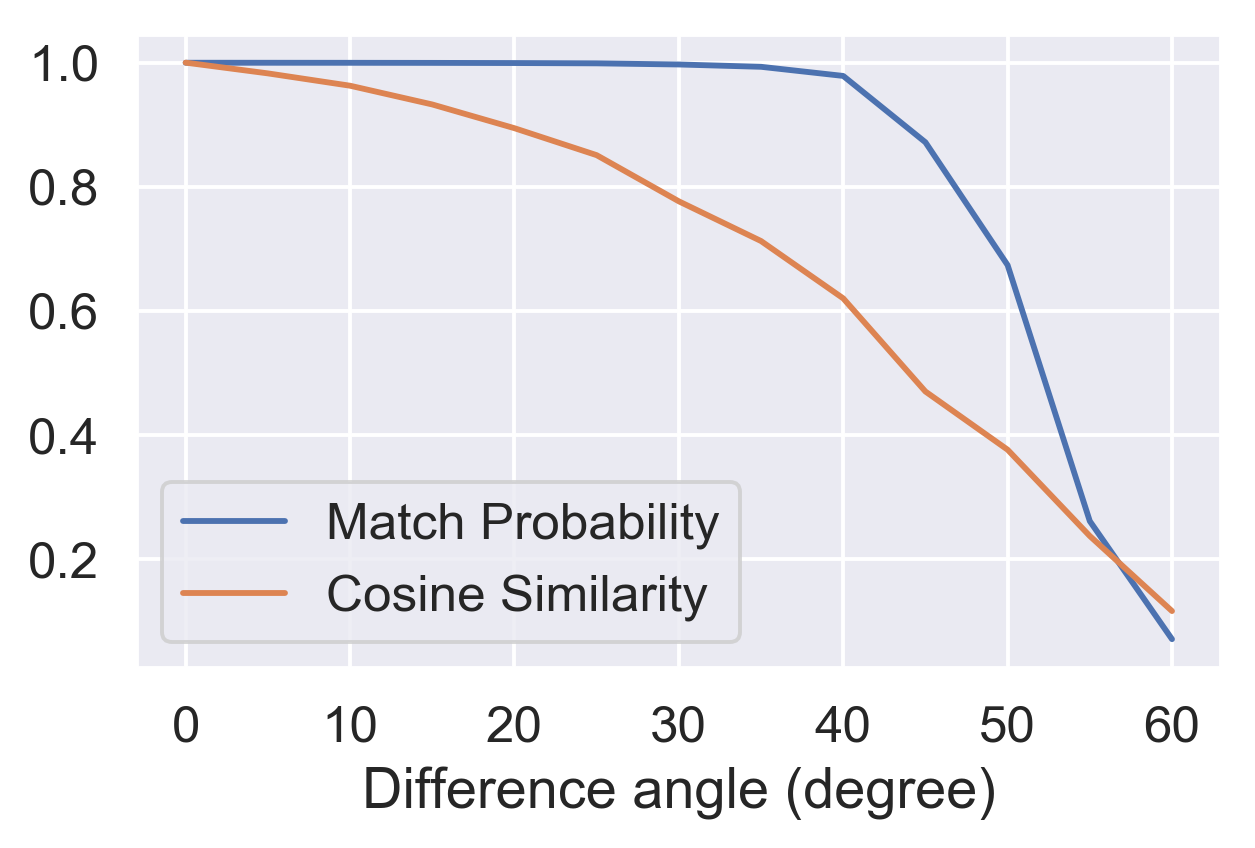

The effect of the face yaw angle on match similarity can be seen in the following figures:

The effect of the face pitch angle on match similarity can be seen in the following figures:

-

class tfsdk.FaceBoxAndLandmarks

-

property landmarks

The list of facial landmark points (Point) in this order: left eye, right eye, nose, left mouth corner, right mouth corner.
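The fixed landmark order lets code address specific points by index. As an illustration, the distance between the two eyes gives a rough measure of face size in pixels. This sketch works on plain `(x, y)` tuples; the assumption that each `Point` exposes `x` and `y` attributes for the conversion is not confirmed by this reference, and `interocular_distance` is a hypothetical helper.

```python
import math
from typing import Sequence, Tuple

# Indices follow the documented landmark order.
LEFT_EYE, RIGHT_EYE, NOSE, LEFT_MOUTH, RIGHT_MOUTH = range(5)


def interocular_distance(landmarks: Sequence[Tuple[float, float]]) -> float:
    """Euclidean distance in pixels between the left and right eye landmarks."""
    (x1, y1), (x2, y2) = landmarks[LEFT_EYE], landmarks[RIGHT_EYE]
    return math.hypot(x2 - x1, y2 - y1)


# Assumption: Point exposes x and y, so real landmarks could be converted with
#   pts = [(p.x, p.y) for p in face.landmarks]
example = [(100, 120), (160, 120), (130, 150), (108, 180), (152, 180)]
print(interocular_distance(example))  # 60.0
```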

-

property score

Likelihood of this being a true positive; a value lower than 0.85 indicates a high chance of being a false positive.

-


class tfsdk.Faceprint

compare(self: tfsdk.Faceprint, arg0: tfsdk.Faceprint) → dict

-

property feature_vector

Vector of floats that describes the face.

-

get_quantized_vector(self: tfsdk.Faceprint) → numpy.ndarray[int16]

Return the feature vector as an array of 16-bit integers.

-

property model_name

Name of the model used to generate the feature vector.

-

property model_options

Additional options used to generate the feature vector.

-

property sdk_version

SDK version used to generate the feature vector.

-

set_quantized_vector(self: tfsdk.Faceprint, arg0: numpy.ndarray[int16]) → None

Set the feature vector from an array of 16-bit integers.
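The quantized form is compact enough to store as a binary blob, for example in a database column. A sketch of a round trip between the `int16` array and raw bytes using NumPy's `tobytes` and `frombuffer`; the helper names are hypothetical, and a fixed little-endian layout is chosen so stored blobs do not depend on the host byte order. Note that this stores only the vector; `model_name`, `model_options`, and `sdk_version` are not captured.

```python
import numpy as np


def quantized_to_bytes(vec: np.ndarray) -> bytes:
    """Serialize an int16 vector as little-endian bytes."""
    return np.asarray(vec, dtype="<i2").tobytes()


def bytes_to_quantized(blob: bytes) -> np.ndarray:
    """Inverse of quantized_to_bytes; returns a writable native int16 array."""
    return np.frombuffer(blob, dtype="<i2").astype(np.int16)


# With the real SDK (not run here):
#   blob = quantized_to_bytes(faceprint.get_quantized_vector())
#   faceprint.set_quantized_vector(bytes_to_quantized(blob))
vec = np.array([1, -2, 32767, -32768], dtype=np.int16)
blob = quantized_to_bytes(vec)
restored_vec = bytes_to_quantized(blob)
```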

-